
Simply point a camera at your face or body, and Adobe Character Animator will detect your key face and body points, track them, and animate 2D characters in real time. This motion capture can be done directly on your PC with a laptop or standalone webcam.

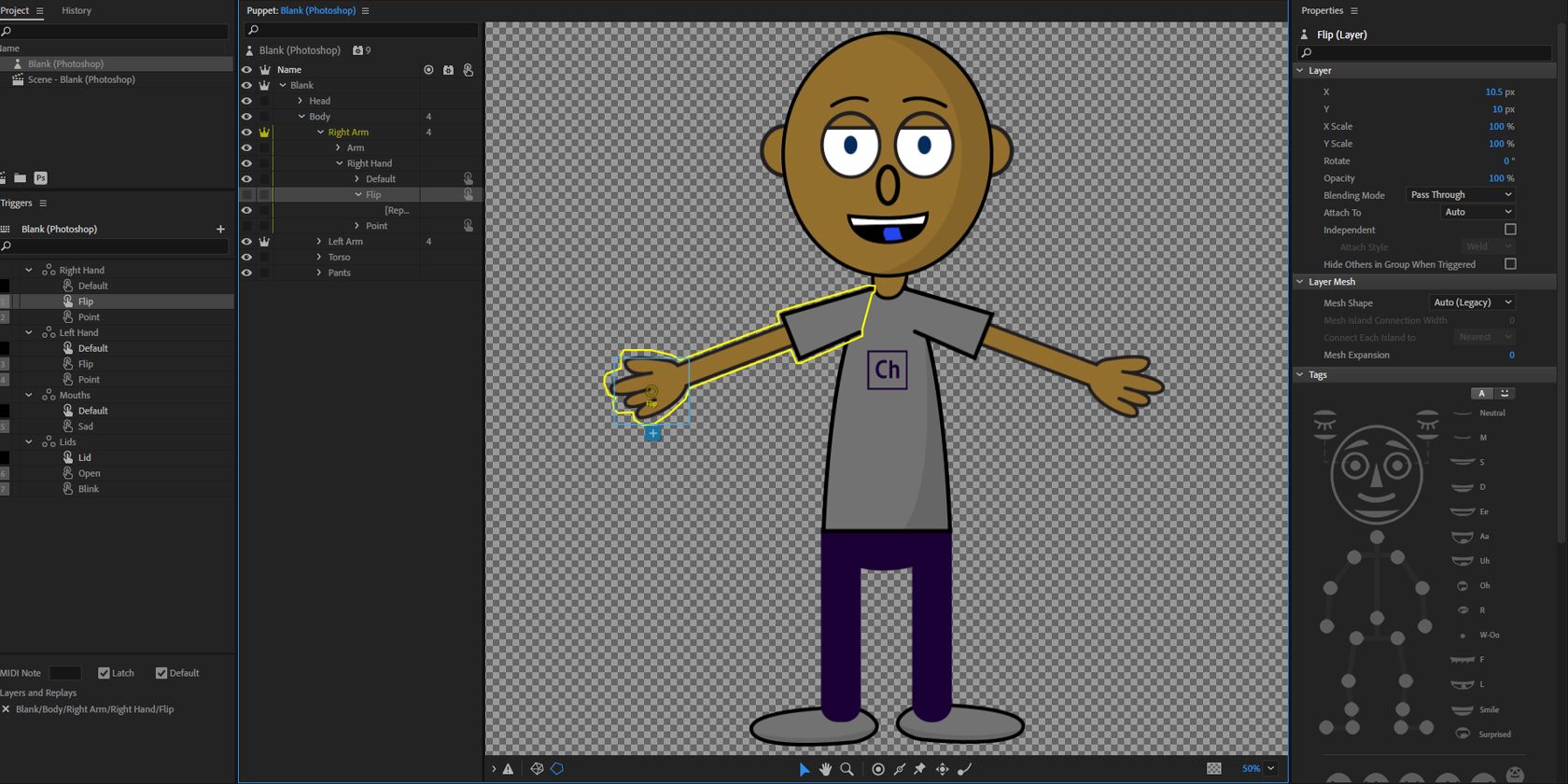

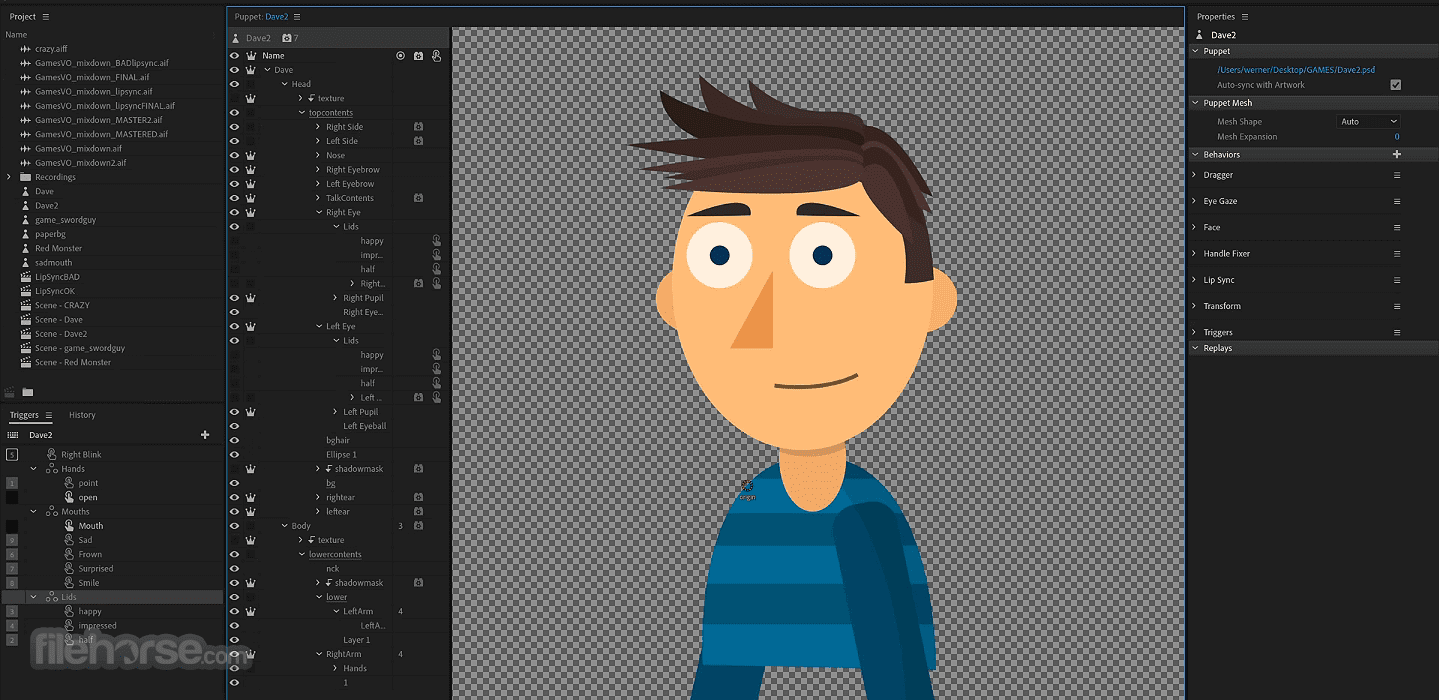

Adobe Character Animator can recognize both facial features and full-body structures in drawings, and rig them so they are ready for real-time motion capture. Your character does not need to be realistic, or even to have a fully detailed face. To create a fully animated character, you first need to import a drawing: either a multi-layered creation made directly in Photoshop, or a finished drawing.

To give complete control over the end product, the app provides not only puppet motion editing and live motion capture, but also comprehensive toolsets for managing scenes, the timeline, and more. The core technology in Character Animator is the combination of motion-capture tools with a multi-track recording system that can take layered 2D creations from Photoshop or Illustrator, transform them into “puppets” with automatic rigging points, and then control them, animate them, and assign them specific motion behaviors.

Adobe Character Animator is an advanced computer animation application that mixes technologies from several design fields into a format that lets users of all skill levels more easily learn to animate 2D characters and objects created inside the Adobe app ecosystem.

The Character Animator 23.1 update, due on the day of its announcement, switches the API used for GPU acceleration from OpenGL to DirectX 12 on Windows and Metal on macOS, improving performance in the Scene and Puppet panels and when rendering to disk. The new Motion Library gets a new Strength parameter to control the influence of a mocap move on the movement of the puppet being animated. In the online documentation, Character Animator 23.1 is also listed as the December 2022 release.

Character Animator is available for Windows 10+ and macOS 11.0+. The software’s Starter mode is free to use if you have an Adobe account; the full version is available rental-only via Adobe’s All Apps subscriptions, which cost $82.49/month or $599.88/year.

Updated 6 December 2022: Adobe has announced Character Animator 23.1.

As well as being widely used on YouTube, Character Animator has been used to produce US broadcast series like Our Cartoon President, and won a technical Emmy Award in 2020. Updates since its initial release have added the option to trigger readymade character animations when streaming live, and keyframe and graph editing tools for refining animations offline.

New in Character Animator 23.0: built-in Motion Library

Although initially designed for animating a character’s face and upper body, the software has expanded into full-body animation, with last year’s Character Animator 22.0 adding body tracking. To that, Character Animator 23.0 adds the Motion Library: a built-in set of over 350 motion-capture moves. The moves, which can be retimed and blended via the software’s timeline, cover movements that would be harder to record live, including dance moves, combat and sports, plus walks, runs and jumps.

Adobe has updated Character Animator, its real-time puppet animation software. Character Animator 23.0 adds a new built-in library of over 350 full-body mocap moves. See above for news of the Character Animator 23.1 update.

First released in 2017, Character Animator generates real-time puppet-style animation from video footage of an actor, complete with lip sync and facial expressions.
